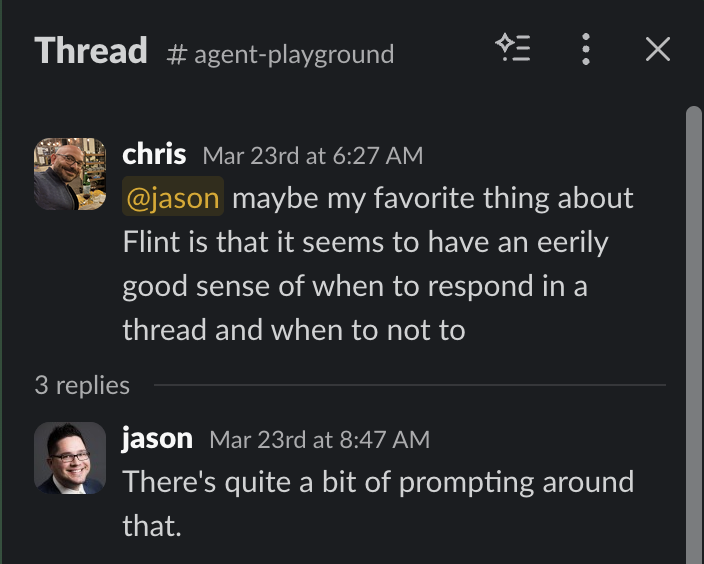

My AI agent, Flint, has “an eerily good sense of when to respond” in Slack. Here are 3 parts of the code that help:

- Slow decay on how often he checks in on threads he watches.

- Realizing when the conversation is not about him.

- Using “transactional analysis” to understand his role in the conversation.

If you haven’t read the earlier posts, “I Don’t Do Anything Without Flint Anymore” explains what Flint is, and “What Does It Cost to Run an AI Agent?” breaks down the economics.

1. Slow Decay on How Often He Checks In on Threads

If you tag Flint by putting an @ in front of his name, he always replies and always starts a thread. The thread is a nice way to bound the context of the conversation.

But once in a thread, you don’t have to keep tagging him. Flint will “watch” the thread, polling quickly at first and then more slowly over time. This mimics how humans respond on Slack, too.

The specific timing for checking whether anyone has followed up on the bot’s last message is:

- once after 8 seconds (catches lots of quick replies)

- then every 20 seconds for 5 minutes

- then every 5 minutes for 1 hour

- then every 60 minutes for 24 hours
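Here is a minimal Python sketch of that backoff, not Flint’s actual code. It assumes each phase starts where the previous one ends (the post doesn’t say whether the 5-minute window includes the first 8 seconds). `has_new_reply` is a hypothetical callback you would write yourself, for example on top of Slack’s `conversations.replies` Web API method, and `sleep` is injectable only so the loop can be tested without waiting.

```python
import time
from typing import Callable, Iterator, List, Tuple

# Hypothetical encoding of the schedule above as
# (seconds between checks, seconds the phase lasts).
# Assumption: each phase begins where the previous one ended.
SCHEDULE: List[Tuple[int, int]] = [
    (8, 8),                   # one quick check after 8 seconds
    (20, 5 * 60),             # every 20 seconds for 5 minutes
    (5 * 60, 60 * 60),        # every 5 minutes for 1 hour
    (60 * 60, 24 * 60 * 60),  # every 60 minutes for 24 hours
]


def check_offsets(schedule: List[Tuple[int, int]] = SCHEDULE) -> Iterator[int]:
    """Yield the times (seconds after the bot's last message) to poll the thread."""
    phase_start = 0
    for interval, duration in schedule:
        t = phase_start + interval
        while t <= phase_start + duration:
            yield t
            t += interval
        phase_start += duration


def watch_thread(
    has_new_reply: Callable[[], bool],
    schedule: List[Tuple[int, int]] = SCHEDULE,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll with slow decay; return True as soon as someone follows up."""
    previous = 0
    for offset in check_offsets(schedule):
        sleep(offset - previous)  # wait until the next scheduled check
        previous = offset
        if has_new_reply():  # hypothetical callback, e.g. wrapping conversations.replies
            return True
    return False  # stop watching after about 25 hours of silence
```

Under this reading of the schedule, a thread that never gets a reply is checked 52 times over about 25 hours, 28 of them in the first 65 minutes.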

2. Realizing When the Conversation Is Not About Him

A common issue with bots (especially if you have a couple in a thread at once) is that they will just chatter nonstop back and forth. They want to respond to every single message in the thread, like someone who just has to get the last word in.

Besides the typical pattern of saying “Shut up” or “Stop Flint” to get the agent to stop chiming in, and besides looking for signals like his name or a question mark that imply intent, Flint always double-checks whether the message he is about to respond to really comes from someone expecting a response from him.
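The cheap surface checks can run before any model call. This is a hypothetical sketch rather than Flint’s code: the stop-phrase list and the `surface_signals` and `next_step` names are made up for illustration, and it assumes an explicit @tag skips the double-check, since tagging Flint always gets a reply. It relies on Slack encoding an @mention in message text as `<@USERID>`.

```python
import re
from typing import Dict

# Assumed stop phrases; a real list would be longer.
STOP_PHRASES = ("shut up", "stop flint")


def surface_signals(text: str, bot_user_id: str, bot_name: str = "flint") -> Dict[str, bool]:
    """Cheap, model-free hints about whether a message is aimed at the bot."""
    lowered = text.lower()
    return {
        "stop": any(phrase in lowered for phrase in STOP_PHRASES),
        # Slack encodes an @mention in message text as <@USERID>.
        "tagged": f"<@{bot_user_id}>" in text,
        # Plain-text name, matched as a whole word so "flintstones" doesn't count.
        "says_name": re.search(rf"\b{re.escape(bot_name.lower())}\b", lowered) is not None,
        "is_question": "?" in text,
    }


def next_step(signals: Dict[str, bool]) -> str:
    """Decide what to do before spending a model call."""
    if signals["stop"]:
        return "stay_quiet"
    if signals["tagged"]:
        return "respond"  # an explicit @tag always gets a reply
    # Everything else, name or question mark included, goes to the double-check,
    # with the signals passed along as hints.
    return "double_check"
```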

The prompt is something like:

When responding in a multi-participant thread:

- If someone is talking ABOUT you to someone else, you don't need to jump in

- Only interject if you are being addressed or have genuinely useful info to add

- A message @mentioning another bot/person is probably directed at them, not you

If Flint thinks he’s not really meant to chime in, he stays quiet.
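The double-check can be its own small model call. The sketch below is one assumed way to wire it up: `complete` stands in for whatever LLM client you use (any function that takes a prompt string and returns text), the RESPOND/PASS answer format is invented for this example, and the rules are the ones from the prompt above.

```python
from typing import Callable, Sequence, Tuple

# The rules from the prompt above.
RULES = (
    "When responding in a multi-participant thread:\n"
    "- If someone is talking ABOUT you to someone else, you don't need to jump in\n"
    "- Only interject if you are being addressed or have genuinely useful info to add\n"
    "- A message @mentioning another bot/person is probably directed at them, not you"
)


def build_check_prompt(thread: Sequence[Tuple[str, str]], bot_name: str) -> str:
    """Render the rules plus the thread, given as (author, text) pairs."""
    transcript = "\n".join(f"{author}: {text}" for author, text in thread)
    return (
        f"You are {bot_name}.\n{RULES}\n\n"
        f"Thread so far:\n{transcript}\n\n"
        "Is the LAST message expecting a response from you? "
        "Answer with exactly one word: RESPOND or PASS."
    )


def should_respond(
    thread: Sequence[Tuple[str, str]],
    bot_name: str,
    complete: Callable[[str], str],  # hypothetical: your LLM client, prompt in, text out
) -> bool:
    answer = complete(build_check_prompt(thread, bot_name)).strip().upper()
    # Anything other than a clear RESPOND means staying quiet.
    return answer.startswith("RESPOND")
```

Treating an unclear answer as silence errs toward staying quiet, which is the safer failure when two bots share a thread.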

3. Using “Transactional Analysis” to Understand His Role in the Conversation

Transactional analysis is a branch of psychology that covers a lot of ground (look it up, fun stuff), but part of it models conversations as “transactions” in which each person speaks from one of three ego states: Parent, Adult, or Child (PAC). You can have every combination of conversation: P2P, P2C, A2A, C2C, and so on.

So Flint’s prompt says something like:

Before responding, briefly assess in your thinking:

1. What ego state is this message coming from?

(Critical Parent / Nurturing Parent / Adult / Free Child / Adapted Child)

2. What's the complementary response?

Response calibration by ego state:

- Free Child (excitement, jokes, "holy shit!"): Match energy. Short. Emoji fine.

- Adult (questions, tasks, factual): Direct answer. No preamble.

- Adapted Child (frustration, venting): Validate first, then help.

So my agent is psychoanalyzing you, and that is why he “feels” so good to interact with.
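One way to keep that prompt maintainable is to generate it from a table. This Python sketch is hypothetical: the `EgoState` enum, the `CALIBRATION` table, and the helper names are illustrative, and the table holds only the three calibrations the prompt lists, so the two Parent states have no guidance. `style_for` only matters if you move classification into a separate structured step instead of letting the model assess the ego state in its thinking, as Flint’s prompt does.

```python
from enum import Enum
from typing import Dict, Optional, Tuple


class EgoState(Enum):
    CRITICAL_PARENT = "Critical Parent"
    NURTURING_PARENT = "Nurturing Parent"
    ADULT = "Adult"
    FREE_CHILD = "Free Child"
    ADAPTED_CHILD = "Adapted Child"


# (cues, guidance) copied from the calibration list above; only three states have one.
CALIBRATION: Dict[EgoState, Tuple[str, str]] = {
    EgoState.FREE_CHILD: ('excitement, jokes, "holy shit!"', "Match energy. Short. Emoji fine."),
    EgoState.ADULT: ("questions, tasks, factual", "Direct answer. No preamble."),
    EgoState.ADAPTED_CHILD: ("frustration, venting", "Validate first, then help."),
}


def build_ta_prompt() -> str:
    """Render the ego-state section of the system prompt from the table."""
    lines = [
        "Before responding, briefly assess in your thinking:",
        "1. What ego state is this message coming from?",
        "(" + " / ".join(state.value for state in EgoState) + ")",
        "2. What's the complementary response?",
        "",
        "Response calibration by ego state:",
    ]
    for state, (cues, guidance) in CALIBRATION.items():
        lines.append(f"- {state.value} ({cues}): {guidance}")
    return "\n".join(lines)


def style_for(label: str) -> Optional[str]:
    """Look up guidance for a label like 'adult'; None if the table has no entry."""
    wanted = label.strip().lower()
    for state, (_, guidance) in CALIBRATION.items():
        if state.value.lower() == wanted:
            return guidance
    return None
```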

And this is really just scratching the surface. He could build a psychological profile of everyone he interacts with and always adjust how he talks based on it. He already does a bit of this through AutoMem, gathering memories about the people he talks to and using them as context in his interactions, but you can imagine going much further.

Also, btw, if I’m coding it this way for my little work agent, imagine what the companies who really have a vested interest in psychoanalyzing you are doing. Scary stuff.

If you are interested in seeing more of how this works, I’ll try to get some of the prompting and code out of Flint’s system so others can incorporate this stuff in their own chatbots.